I've seen a few examples of text-to-image synthesis outputs in my twitter and instagram streams of late. This weekend, a friend posted some images he'd made. Or rather for which he'd supplied prompts to the two models that then work together: VQGAN & CLIP.

I'm not fully up to speed (understatment) on the deep stuff around how these machine-learning models work, but in a nutshell it seems that VQGAN (Vector Quantized Generative Adversarial Network) makes a stab at an image based on your text (that's mind blowing enough) and then CLIP (Contrastive Image-Language Pretraining) judges the match between the image and the prompt giving feedback to VQGAN to have a go at improving it - when the scores settle down, an image is ready for output (I think...) So given the simple prompt "banana" VQGAN (how?!?!?) makes a picture of what it "thinks" is a banana and CLIP says, "yeah I'm like 0.1% sure that's a banana, can you do better?" and VQGAN doesn't get upset it just keeps trying until it gets a better score, over and over and over again. That's probably wrong in parts and over-simplified in others, but it's how I'm understanding it.

Anyway it makes cool pics based on words (except sometimes it doesn't, sometimes it just gives up). And the thing my friend pointed to was a tool at hypnogram.xyz for doing just that.

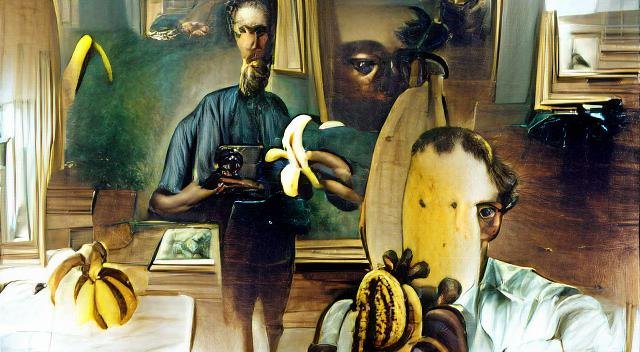

My initial attempts probably reveal more about my subconscious and the surreal way my brain works when asked for a prompt, but anyway, I like 'em. (Now of course, I realise, they're probably crying out to be NFTs!)

Lloyd Davis Self-Portrait With Banana

Satan by John Constable

Dali - Margaret Thatcher riding a tricycle eating spaghetti

Titian - Nigel Farage,Boomtown Rats eating ice cream

Rosetti - your mum in a pub with sailors

Cartier Bresson - two angels sliding down bannisters in Grimsby

Makes you wonder how a computer would perceive the world.