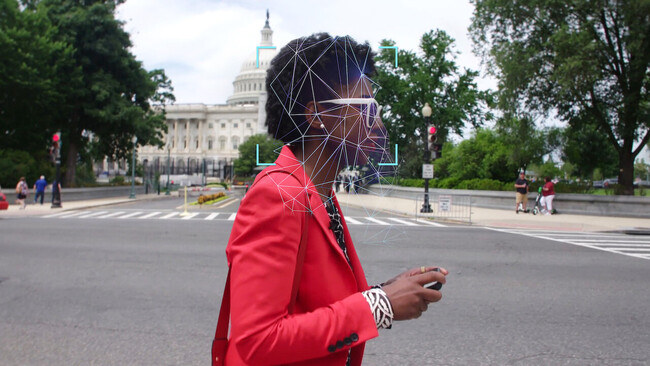

It all starts with a disturbing realization, though one that could be dismissed as an anecdote: Joy Buolamwini, an African-American researcher, notices that a facial recognition program does not detect her face or register it as that of a person for its database. But it does when she puts on a neutral mask... a white one.

This is the starting point of a documentary that touches on many topics, all rooted in the arbitrariness and lack of ethics with which algorithms collect the information that shapes their databases and feeds different AIs. That arbitrariness grows out of prejudices we all hold, with the result that, as one of the participants in this interesting documentary puts it, racism is mechanized and replicated.

At another point, the documentary recounts a young Chinese woman's experience of her country's system of constant surveillance and identification, and of its social credit system. Next, an American expert argues that this system is not so different from Western surveillance of citizens through social networks... except that in China, at least, the government admits it.

In this way, the documentary travels continuously around the world, asking questions about privacy, technology and how new computer models impose restrictions on us that, in many ways, we thought we had overcome. 'Coded Bias' does not offer a single, indisputable argument, but it does throw at the viewer a lot of questions that we urgently need to ask ourselves (and, above all, answer).

'Coded Bias' is part of a series of recent documentaries on technology that warn about the dangers of very similar issues: the loss of privacy, how technology moves, without our noticing, into areas that were once strictly the purview of humans and, in general, the risk of losing control of what we ourselves have created. Both 'The Great Hack' and 'The Social Dilemma', which can also be seen on Netflix, focused on the uncontrolled disintegration of privacy.

'Coded Bias' also gets into this theme, but as part of a larger problem: the abstract threat of a decentralized mathematical algorithm that learns, but learns the wrong things. And yes, it does take on electoral manipulation (not always premeditated, which is one of the most disturbing aspects of the whole thing: there are no supervillains here, only failures of society as a whole), but also the erosion of individual rights, such as the way British or American law enforcement uses databases built on prejudiced data.

It is at these moments that the documentary indulges in a sensationalism that threatens to trivialize its argument. Nods to films such as the adaptation of '1984' or the evil AI of '2001: A Space Odyssey', with a robotic voice speaking in the first person, are nice but clash with the seriousness that the subject demands. Luckily, 'Coded Bias' and its director Shalini Kantayya (one of the experts featured speaking in the film) do not forget that we are talking about technology but, above all, about its impact on people.

"AI is based on data, and data is a reflection of our history," Buolamwini says in the documentary, and that is the essence of its somewhat bitter conclusion: AIs are biased because we humans are full of biases. On the other hand, the documentary is optimistic, closing with a small triumph of the researcher over the mathematical machinery, a nice technopoem and a call to action. It is in our hands to put a stop to many of the excesses that 'Coded Bias' denounces and, from that point of view, despite its warnings, it manages to go further than other documentaries of its kind.