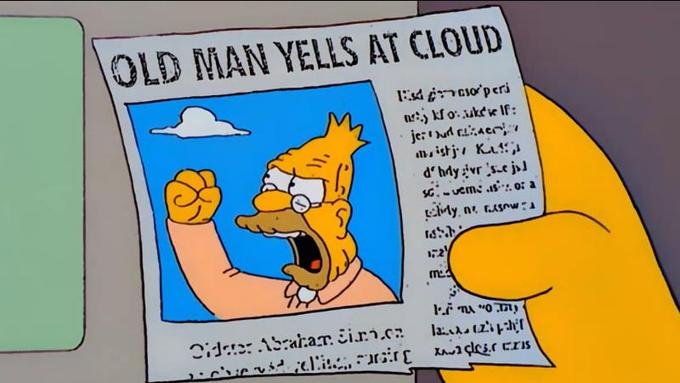

I have been harshly critical of A.I. slop and the corporate trend to label everything as "A.I.-enhanced," but I do think there is room for nuance. I'll try to explain my thought processes below, but the image should suffice for those with little patience.

Image source and more meme information

Ancient philosophers debated the merits of reading and writing ideas as opposed to discourse and recitation. Now we integrate debate and written treatises. Text allows us to engage with ideas from the past, almost like speaking with the dead. It also allows us to examine ideas at our own pace, exploring them as deeply as we like. Later philosophers transcribed earlier works by hand with commentary, so we can eavesdrop on old conversations, too. New technology meant new ways to learn and think.

More recently, there is the pocket calculator, which is younger than my parents. It was seen as a dangerous substitute for learning math. When I took calculus, we were limited to specific graphing calculator models for fear we might use more advanced machines as a crutch instead of a tool. Sure, some might use it to avoid learning basic addition or their times tables, but you need a basic understanding of mathematics to use a calculator, and in the end, it removes drudgery.

As an "elder millennial" or "xennial," I grew up in an analog world of encyclopedia sets and card catalogs, the rise and fall of CD-ROM multimedia encyclopedias, and the emergence of Wikipedia. I know the pros and cons of each. As a librarian for over a decade, I still value print as a medium of information and entertainment, but I also know the benefits of comparatively unlimited digital data storage. Errors in a printed dictionary persist until the next printing and remain in old copies, while wikis can be updated instantly. On the other hand, it's comparatively easier to spread hoaxes and lies online. But that same openness lets perspectives outside government and corporate media exist and spread. Technology has its place and is neither inherently good nor bad.

So, with that perspective, what do we make of generative artificial intelligence? It is still a dynamic, developing technology. Like the early Internet, there is a lot of hype and a lot of tech bubble nonsense, but that doesn't mean it's all a scam.

People are using it to replace skilled accountants and generate reports. However, if you don't have a skilled human to check for hallucinations, you have no idea how good the data analysis is. Rumors swirl of completely fabricated data already leading to bad business decisions, and we know in the legal field, lawyers are submitting briefs which cite nonexistent case law. Remember, A.I. still doesn't know anything, and remains a sophisticated algorithm limited by its training data. You, the human, can truly learn.

This leads to another line of thought, though. Some people also report that the process of writing an A.I. prompt can help them find the answers they need on their own. This is just an update to the old technique of talking through a problem by explaining it to an inanimate object. And sometimes, A.I. can more efficiently trawl through its training data to find remarkably effective answers, so it's not like it's always wrong.

That said, I do see valid concerns about students using A.I. to complete school assignments. Education is not about how fast you complete assignments, but rather how well you develop your mind. The process of researching and writing a paper is less about the document itself than about developing skills. We can't memorize the entirety of human knowledge, but we can know how to find answers. Prompts in ChatGPT, Grok, or Claude are not the same thing. The data are incomplete, but preliminary studies seem to show that even compared to old-school Google searches, A.I. results are far shallower. Scans of people using prompts versus other tools suggest much less mental effort, and interviews after the fact suggest less information is retained.

As companies like Google and Amazon try to integrate text, speech, and A.I. assistants into every product, there is also a very real security concern. Data breaches are already commonplace, and people report that Google and Alexa devices seem to be listening even when "off." Cloud computing and integrated A.I. multiply these risks, to say nothing of how shady these megacorporations are, or how governments also tend to play fast and loose with surveillance.

Finally, remember when crypto was bad because its mining uses electricity and water? Now A.I. dwarfs the energy impact of Bitcoin, and it is growing so fast that computer chip production has shifted almost entirely to satisfying that industry. I'm no environmentalist wacko, but this seems wasteful.

In summary, I see generative A.I. in its current state as a limited tool with a handful of useful applications vastly overshadowed by a new tech bubble of speculation and waste, with a big splash of privacy concerns. Maybe it will mature. Maybe it will crash and burn. For now, be mindful and deliberate in how you use it, and don't trust our benevolent corporate overlords as they insist we need it integrated with every aspect of our lives.


At the moment, it's deeply problematic, but I feel it will get better exponentially rather than crash and burn. Whether "better" is good for us is the problematic part.

I've heard of projects to make A.I. assistance able to cite sources properly for research. I've also seen stories of lawyers submitting legal briefs with their entire prompt conversations still embedded in their documents because they never even proofread the slop. It's a bit like crypto and NFTs: many utilitarian cases overshadowed by hype and grifters.


They should be instantly disbarred. Lazy a.f. Perhaps it's only as good as the tool who uses it. High school kids are guilty of this too.

Couldn't agree more. How much money has already been made from AI gimmick slop apps (basically vehicles for selling ads), AI songs on Spotify, YouTube ad revenue from AI videos, etc.? I think there's a little kickback. A friend of mine created an AI video for Instagram/Facebook for his 4x4 accessory company because he was time-poor and skill-poor, and his customer base did not appreciate it because it lacked authenticity, which is, ironically, exactly what he's about. I hope there's kickback!

I do think it's moving exponentially and we can't possibly imagine what comes next, because our human minds just can't compute it.

Just imagine how much processing power must be used by gooners making A.I. porn because there somehow aren't already enough ways to see real naked women on the internet.