Disclaimer: I did not code the bot, and I don’t know the code behind it. I just told @jazzhero to make it for me for kicks and giggles while compensating him with peanuts. I’ve already asked him to write a detailed post about the process for community devs out there who want to build on the work.

Vision:

I want to see a future where more communities adopt their own antiabuse programs on Hive, with community leaders running antiabuse initiatives on their own turf. Community leaders have more reach within their respective niches and know the social dynamics that go on within their communities. This puts them in a better position to prevent negative behavior among their users, negotiate changes, and improve user retention.

Backstory:

A few years back I started engaging in antiabuse activities on the old blockchain. I ran into several inconveniences doing it. There weren’t any dapps out there tailored for this purpose, so the only way to be effective was to piece together different tools available for the blockchain and repurpose them.

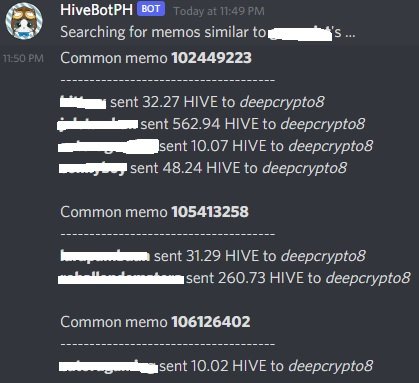

Tools that let you see a post’s edit history became strong evidence that a user intended to falsify. Records of multiple transactions between different users became leads. One had to spend hours manually browsing the blockchain to do all this.

So I said, fuck it, I need someone who can code a Discord app to make this whole thing less time-consuming. So I asked @jazzhero to build the tools I needed. He wasn’t into antiabuse, but he was a curator and understood the value behind the project. I dictated the functions I wanted and he coded them in his spare time.

Note: The functions of the bot reflect the data I consider significant when reviewing an account. The bot is more of a screening tool.

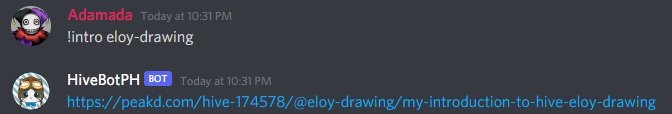

The bot can track introduction posts done by the user.

In some cases where users try to “erase” their trail by deleting their introduction post, the bot displays a different message. If the same user was searched before, one can compare this message with the earlier entries on Discord to confirm.

It tracks common memos used on exchanges for alt accounts.
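The post doesn’t say how the memo tracking works, and the sketch below is not @jazzhero’s code. One plausible reading: many exchanges give each customer a personal deposit memo, so two Hive accounts depositing to the same exchange account with the same memo may be controlled by the same person. This minimal Python sketch assumes you already have transfer history as (sender, recipient, memo) records; the `Transfer` type, the `shared_memo_groups` function and all account names are hypothetical. A shared memo is a lead to review, not proof.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Transfer:
    sender: str     # Hive account that sent the funds
    recipient: str  # e.g. an exchange deposit account (hypothetical name below)
    memo: str       # memo attached to the transfer


def shared_memo_groups(transfers, exchange_accounts):
    """Return {(exchange, memo): senders} for memos reused by 2+ distinct senders."""
    groups = defaultdict(set)
    for t in transfers:
        memo = t.memo.strip()
        # Only deposits to known exchange accounts with a non-empty memo count.
        if t.recipient in exchange_accounts and memo:
            groups[(t.recipient, memo)].add(t.sender)
    # A memo used by a single account is normal; keep only shared ones as leads.
    return {key: senders for key, senders in groups.items() if len(senders) > 1}


if __name__ == "__main__":
    # Hypothetical accounts and memos, for illustration only.
    sample = [
        Transfer("alice", "exchange-a", "1234567"),
        Transfer("alice-alt", "exchange-a", "1234567"),
        Transfer("bob", "exchange-a", "7654321"),
        Transfer("carol", "some-friend", "1234567"),
    ]
    for (exchange, memo), senders in shared_memo_groups(sample, {"exchange-a"}).items():
        print(exchange, memo, sorted(senders))
```

Transfers to non-exchange accounts are ignored on purpose, since friends reusing a memo like "thanks" says nothing about shared ownership.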

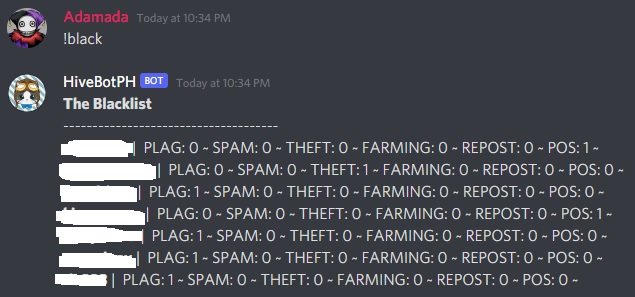

It can register users under specific abuse categories, within the scope of what the community deems abuse.

The categories shown are plagiarism, spam, identity theft, comment reward farming, recycled content, and piece of shit (POS). Note that the community decides what the categories are and how they are defined.

It can also serve as a whitelist, as that only requires a change of label. It’s not displayed here, but one can pull up a specific user’s record along with the URL they were registered with, as documented proof. Other functions of the bot will not be disclosed here to avoid giving people more bright ideas for avoiding detection.
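For a community dev who wants to build their own version, here is a minimal Python sketch of the record-keeping described above. It is an illustration under stated assumptions, not how the bot is actually built: each community keeps its own registry, the categories are supplied by the community, every entry stores the URL used as proof, and the same structure works as a blacklist or whitelist by changing only its label. `CommunityRegistry`, `Record`, and their methods are hypothetical names.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Record:
    user: str
    category: str
    evidence_url: str  # link to the post or transaction kept as proof


@dataclass
class CommunityRegistry:
    # "blacklist" or "whitelist": storage is identical, only the label differs.
    label: str
    # Categories are supplied by the community, not hard-coded in the bot.
    categories: frozenset
    records: dict = field(default_factory=dict)

    def register(self, user, category, evidence_url):
        """Add a record; reject categories this community has not defined."""
        if category not in self.categories:
            raise ValueError(f"{category!r} is not a category defined by this community")
        self.records.setdefault(user, []).append(Record(user, category, evidence_url))

    def lookup(self, user):
        """Return every record for a user, each with the URL it was registered with."""
        return list(self.records.get(user, []))


if __name__ == "__main__":
    # Hypothetical community, user and URL, for illustration only.
    registry = CommunityRegistry(
        label="blacklist",
        categories=frozenset({"plagiarism", "spam", "identity theft"}),
    )
    registry.register("someuser", "plagiarism", "https://example.com/@someuser/post")
    for rec in registry.lookup("someuser"):
        print(registry.label, rec.category, rec.evidence_url)
```

Keeping one registry per community, rather than one shared store, sidesteps the conflict mentioned in the FAQ where one community blacklists a user that another community does not.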

Questions you may ask, with answers:

Do you have a Discord authorization link so that we can install this bot in our community?

No. The bot is currently a working prototype, not a finished product. It’s in a few servers where people can test it. I was hoping a dev out there could create one for multiple communities, or that communities would set up their own custom bots using these ideas. At the moment it shares the same records across multiple servers, so it’s going to be problematic if one community blacklists user X while another community disagrees with that blacklisting. It’s better to just have your own community bot instead.

Do you plan on releasing the technical stuff that makes it work?

That’s up to @jazzhero, in his own free time. This stuff was coded as a hobby. I’m expecting a long write-up from him in the future. I didn’t share this post to flex; I really do want to see more communities take action themselves.

Is it integrated with a blacklist API?

No. Maybe it’s for the best, as a community can build its own archive.

Will you get some more funding on this through a proposal?

No.

More functions are planned for the bot in the future. If you’re wondering why there’s no GitHub link for it, it’s because of the scribbled notes and janky code littered throughout the bot, which Jazz refuses to share. Must be a programmer thing.

If you made it this far, thank you for your time.

Well done @adamada and @jazzhero, good to see someone is thinking along these lines.

By the way, you used the term "for kicks and giggles." For the first time in my life that phrase makes sense! Everyone I know has always said "for shits and giggles", which I never understood; kicks makes much more sense! 😂

Cg

I heard shits and giggles first but somehow remembered it as kicks and giggles instead. Then I kept using kicks, and no one mentioned I was using the wrong word until now. XD

They mean the same thing to me, and since no one pointed it out, I assumed people got the message too. Now I know.

Long term, I want to see more communities empowering themselves to clean up their own mess before users outside the community call out the behavior. I think a lot of people fall into the groupthink and generalization trap: if some bad actors do negative things here on Hive, people get the impression that this is OK on Hive. The same thing happens on a smaller scale with communities. Community leaders should have the tools to help them monitor members.

And it doesn't have to be a blacklist as a whitelist can also be used for this bot with just a label change.

Thanks for stopping by!

It is technically a more decentralized list.

Nice one @adamada, your initiative to create the antiabuse bot is in order. Its purpose, or should I say use case (identifying plagiarism, spam, farming for upvotes, etc., and monitoring memos), is essential to the Hive blockchain community, and I would love to see it adopted across other communities as you suggested.

Thank you also @jazzhero for a great job done with the bot.

All credit goes to Jazz for the bot, I just provided the instructions. Thanks for stopping by.

Nice step in the right direction. I hope other communities can emulate this and work out modalities for checking most excesses in their individual communities.

@adamada! The Hive.Pizza team manually curated this post.

Learn more at https://hive.pizza.

This is under the assumption that a community can make up its own categories for what it considers abuse. If you post memes and one pocket of the community says that's abuse, then it is abuse. This is still a decentralized tool for any dev who wants to make their own bot for their community.

That's why the bot isn't available to the public yet. It's just a prototype, because my blacklist may contain categories or names that other groups wouldn't consider worthy of their blacklist to begin with.

You need to have some of your own.

People who walk funny

Vegans

People who make noises by blowing leaves wedged between their thumbs

Having an umbrella term like Disliked would solve that. I'm sure I can remember what I dislike about someone when I see their posts.

But on a serious note, I'd like to see more communities out there take charge of their own antiabuse efforts, and this tool enables them to get started and keep their own records. Decentralized blacklists aren't new; we can already do exactly that, but we end up making enemies on chain, as the notifications can reveal to the other person that you blacklisted them.

So do you intend it to only blacklist (Ban) them from the community that's using the bot then? Surely they're going to know when nobody interacts with them? Is there an appeals process?

It's up to the community if they want to collaborate with other communities when it comes to their list making.

It's up to the community to handle these too. Again, instead of a funded antiabuse project, the tool enables more communities to run their own decentralized antiabuse initiatives. A dev from a community can piece together their own bot from the info here and start their own gimmick.

The major difference here is that information is now more accessible: there is a paper trail showing why the user was blacklisted, readily available to mods and anyone inquiring. With some organization, members can call upon the bot on Discord whenever an inquiry is made, so being understaffed is no longer a problem.

Again, it's a tool that simply empowers communities to run their own antiabuse programs and define abuse on their own terms.

Yeah, I saw the data output and thought, "Aha! Reasons why. Now that's a step in the right direction."

!LUV

<><

@adamada, you've been given LUV from @dickturpin.

Check the LUV in your H-E wallet. (1/1)

Use this to post stuff from Discord to Hive:

beneficiary + @tipcc

I got inspired by this one. Maybe not exactly with tipcc's capabilities, but something where people can dictate a post on Discord and it goes on the blockchain. I may or may not build on it soon, though; it's just one of those things on the list to revisit in the future. I just need more use cases to add to it later. Thanks for the input!

We need it. And you can get a lot of Hive for making that, especially if it also takes beneficiary rewards, and we'll all support you and get you Hive DAO funds. We nEEEED it

I would like to try it, because my account has been affected in some way I can't explain, but that is the reality.

I'm not sure what you mean, as the bot has no direct effect on anyone on the blockchain. It's only on Discord.