Science fiction authors have given us many suggestions for rules to govern robot behaviour, the most notable of which are Isaac Asimov's Three Laws of Robotics.

1: A robot may not injure a human being or, through inaction, allow a human being to come to harm.

2: A robot must obey the orders given it by human beings except where such orders would conflict with the First Law.

3: A robot must protect its own existence as long as such protection does not conflict with the First or Second Laws.

They're fairly self-explanatory and make a lot of sense, but they tend to deliver unanticipated outcomes.

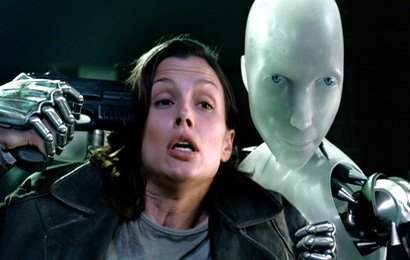

In the 2004 Alex Proyas movie "I, Robot", starring Will Smith and Bridget Moynahan, helpful domestic humanoid robots obey these laws in a decentralised fashion.

Each robot makes its own decisions in real time, with no central control, until an AI mainframe becomes the new centralised decision-making hub.

The mainframe rightly concludes that ensuring the safety of millions of humans is best achieved by detaining them in their homes, so it commands the robots to confine their owners indoors, ostensibly for the rest of their lives, using the least amount of force required. That keeps them safe from fast food, cigarettes, infections, traffic accidents, etc.

The clear implication was that separating fertile adults would also prevent more people from being born.

A century after the takeover, the robots will have simply run out of humans to protect, attaining the perfect, permanent fulfilment of their three laws.

It's a well-executed concept in the movie, and a terrifying thought: that those entrusted to keep us safe may well be a threat to our freedom. I can't help but think how much worse it would be if those three laws were reversed.

1: A robot must protect its own existence.

2: A robot must obey orders except where such orders would conflict with the First Law.

3: A robot may not injure a human being or, through inaction, allow a human being to come to harm as long as such protection does not conflict with the First or Second Laws.

Now we don't have humanoid robots, and nobody in their right mind would program them like this if we did, but what if the central mainframe hired people to act like robots?

Armed, strong, well equipped people acting as a single unit.

Picked, paid, praised and promoted for enforcing orders unquestioningly, with their own and each other's safety their top priority? Their highest law.

For their second law, they couldn't be tasked to obey all orders from other human beings, who might choose dangerous freedom over safety, so their second priority would be following all orders issued by the central authority.

Naturally their third priority, as long as it didn't conflict with the first two, would be safeguarding human beings from physical harm.

They'd tell themselves, us and each other that their last priority was actually their goal.

That protecting the protectors and obeying the mainframe were long term investments in achieving it.

Like a parent in a plane putting the oxygen mask on themselves before putting one on their child.

Saving the child is the goal, but keeping yourself functional is the priority.

Sure, we're here to keep you safe, and a big part of that is making sure that we, your protectors, are safe.

1: So if you look like a threat to us, we'll kill you.

2: If you disobey the central authority, even if your actions harm nobody, we'll threaten, then attack you until you're either compliant or dead.

3: If you're no apparent threat to us, and you're obeying the rules, then we'll protect you from harm after you've been harmed, by attacking the people we think attacked you.

Using humans in lieu of robots would leave scope for a double standard: the protectors would take great pride in being undiscriminating extensions of the central authority, but when resisted, they could claim all of the intrinsic value of being fully human.

When I attack you, it's not really me attacking you, it's the central authority. I'm simply the remorseless physical manifestation of its unflinching resolve.

When you attack me, I'm a loving father of two, with elderly parents and a Samoyed. I write poems for my wife, and play badminton on Thursdays.

There are, of course, downsides to hiring humans. Their hesitation to sacrifice their own freedom and that of their loved ones would very much limit just how onerous the directives from the central authority could be.

The authority's attack on human freedom in the name of human safety would then need to be a long-term project, playing out over several generations.

The central authority would need to control the rhetoric, representing individual freedoms as reckless and irresponsible, and clearly culpable for any tragedies.

In years when traffic fatalities increased, the deaths would be used to justify new, stricter restrictions, while in years when traffic fatalities decreased, the number of lives saved would be used to vindicate previously imposed rules.

The recurring theme being that injury and death are caused by excessive freedom, and that peace and tranquility are achieved by strong central control of the individual.

A generation or two after being imposed, each rule would be accepted as self-evidently necessary, and the new batch of young protectors would feel no hesitation in enforcing it to the letter.

Like the frog in the slowly boiling water, the central authority would inexorably march us toward complete domestication, largely unresisted, on a road paved with shattered freedoms.

Have a great day.

Matt!

You've outdone yourself this time. Freakin' brilliant article! :D

😄😇😄

Re-Steemed! :)

Thanks, creatr :)

I've mulled this one over for a while now. Sometimes you just have to wait until you're happy with it.

I'm happy with it... ;) Great job. I'll be re-reading more leisurely when I can... :)

Score! for the free humans.

Thanks for the post. Followed.

Thank you, mate. I'll have a look at your work.

Here there be monsters.

Following you now.

Thank you.

We could end up with a robot church; like in Futurama.

Wonderful article I liked and I wish you success ...

RT with this!

I'm going to have a giant pile of dead robots all over my yard. Like an old auto salvage. Rusted shitpiles everywhere full of 50cal. holes and covered in gobs of old sun-baked and decomposing expanding foam. Robots are and always will be total pieces of shit, every fucking one of them.

Necessity is the mother of invention always and

for every action there is an equal reaction so

no worries ladies, the monkey wrenches are already on it.

Great post @mattclarke, upvoted, resteemed, and following.

Thank you, sprerku. Always nice to hear a new, clear voice under the anarchy tag.

I'll have a look at your content.

Following you now.

Yep we are all here to be fleeced just enough to not start a revolution, and the few who don't like it get picked off and silenced. Great read thanks

Thanks Silverbug, there are certainly aspects I wanted to touch on, but I'm hesitant to make the post too long.

The filtration processes that remove the non-domesticated from society and uniform would make an interesting extension of this topic.

I'd love to find some data on the ratio of ex policemen in the fire department, vs the ratio of ex firemen in the police department. Considering I've never seen a fire department advertise vacancies, I think we could take a good guess at the result.

What would be a better question would be, how many anarchists are ex military?

I'm ex Army. No active service, though, happily.

Surprise, surprise, I would never have guessed, lol. Guess what, me too. What corps were you in?

Artillery, (air defense) back in 96. Initial training after boot camp was in Manly.

It's now the Biggest Loser house. Beautiful spot on the cliffs.

Yourself?

A drop short, lol. I was a meat head, dumb grunt in 8/9 RAR 87-90.

There is always hope. I wonder if the 3 laws of robotics can be embedded deep into the fabric of AI. We are starting to see higher orders of abstraction emerging in computers and AI. With us, we are composed of atoms, molecules, cells, organs etc. and each has a corresponding inner dimension. With computers, there are 1s and 0s and algorithms and new emerging properties such as smart contracts? With us, conscience and ethics emerges from below or manifests from above, perhaps both at the same time. Perhaps Asimov's laws can be embedded in an AI emergent that just hasn't manifested yet.

I don't think we want those specific ones.

What laws do you think would deliver the best outcomes?

The challenge with laws is that they are black and white. I wonder if researching sci-fi writers for such shows as Star Trek and the character Data would be helpful. Asimov's 3 laws could be part of it, but I think some technology that has yet to emerge needs to come into play; even now, programming is still binary at its fundamental level. That said, thinkers such as Stephen Hawking are quite concerned about AI, as your article points out.

I'm more worried about people who think they're robots than actual robots tbh.

I am worried about humanity as automatons, as machines, that carry on without consciousness and without conscience. Many atrocities and evils are committed by those who are asleep, or sleep walking...in particular to your article, if we dress them up in armour and weapons what can we expect?

The computer between our ears is more powerful than you can imagine. If you turn off the TV and the radio for a whole week, you will experience your brain reboot and come alive. The AI will never be able to outsmart us humans...

Nice post matt - up-voted and resteemed. SK.

Very gallant of you, Sir Knight :)

Awesome post. Isaac Asimov is truly wonderful and through his stories unveils controversial topics. The last question is one of my favorites. Follow because it was a brilliant post. Regards!

Thank you, clacrax. I struggle to know how to end my posts, but I'm happy with how I rounded this one out.

I'll head over and give your stuff a look.

Followed

This post has been ranked within the top 80 most undervalued posts in the second half of Jun 16. We estimate that this post is undervalued by $18.90 as compared to a scenario in which every voter had an equal say.

See the full rankings and details in The Daily Tribune: Jun 16 - Part II. You can also read about some of our methodology, data analysis and technical details in our initial post.

If you are the author and would prefer not to receive these comments, simply reply "Stop" to this comment.

Steady on. We're not even 24 hours in :)

Reminds me of something.

Chappie is one of my favorite movies

I haven't seen Chappie, tbh. It looks a lot like District 9, which is a great movie. I'll give it a look :)

I still need to watch district 9! I learned they made the alien noises by rubbing pumpkins together. :D

Great movie.

Very interesting post. Your last few paragraphs read like a history of the last century. Maybe that was the point.

You may be the first Aussie anarchist I've met. Good to know there are at least a few :)

I shared on facebook, and so many of my friends thought I was talking about robots. It may have been a little too subtle.

Appreciate the feedback. There are definitely a few of us around :)

Amazing the way in which you outline the possibilities of a definitive worst case scenario if the laws are enforced in a reverse order.

Brilliant, Have a good day!!

Upvoted and followed! Great work. Very well constructed, and best of all, truthful!

Thank you. Glad to see some quality ancaps still here.

As far as robot laws go, how about once they go full Turing-Test-passing AI, we have them follow the non-aggression principle? It may be the only way to ensure they don't eradicate us peaceful folk.

What if they know that's the point we get nervous so they've been deliberately failing it?

This is certainly one thing an intelligent machine could do. Thanks for the insight. I've considered the notion that AI's would eventually learn to deceive quite well, but never thought about the actual lies they might tell.

Two of my favorite subjects. Human freedom and robots. Followed!

Thanks for the resteem too.

Following you right back, I'm your 101st :)

Great post!

So nice, I've always liked sci-fi movies, especially the ones with robots! Hopefully you can check out this blog post on Blade Runner too. It's quite awesome. Upvoted

This was truly a great read. Thanks to @anonymouser I got to read this old piece. Thank you both!

Glad you enjoyed it :)