Below is a list of Hive-related programming issues worked on by the BlockTrades team during the last week or so:

Hived work (blockchain node software)

Continued development of TestTools framework

We made a substantial number of changes to the new TestTools framework that we are now using to do blackbox testing on hived: https://gitlab.syncad.com/hive/hive/-/merge_requests/278

There are too many changes to discuss individually (see the merge request for full details), but at a high-level, the new testing framework allows us to perform more complex tests faster and more efficiently than the previous framework.

Command-line interface (CLI) wallet enhancements

We continued work on improvements to the CLI wallet (refactoring to remove the need for duplicate code to support legacy operations, upgrading the CLI wallet tests to use TestTools, and adding support for signing transactions based on authority), and I expect we’ll merge in these changes in the coming week.

Continuing work on blockchain converter tool

We’re also continuing work on the blockchain converter that generates a testnet blockchain configuration from an existing block log. Most recently, we added multithreading support to speed it up, and those changes are being tested now. You can follow the work on this task here: https://gitlab.syncad.com/hive/hive/-/commits/tm-blockchain-converter/

Hivemind (2nd layer applications + social media middleware)

Reduced peak memory consumption

We reviewed and merged in the changes to reduce memory usage of hive sync (just over 4GB footprint now, whereas previous peak usage was approaching 14GB). Finalized changes that were merged in are here: https://gitlab.syncad.com/hive/hivemind/-/merge_requests/527/diffs

Optimized update_post_rshares

We completed and merged in the code I mentioned last week that creates a temporary index for faster execution of the update_post_rshares function. After some tweaks to the code, we were finally able to achieve the same speedup in a full sync of hivemind on one of our production systems (the function with the temporary index processed 52 million blocks in 19 minutes).

To achieve the results we saw in our development testing, we found we had to run VACUUM ANALYZE on the hive_votes table before running the update_post_rshares function, to ensure the query planner had the statistics it needed to generate an efficient query plan. The merge request for the optimized code is here:

https://gitlab.syncad.com/hive/hivemind/-/merge_requests/521
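To make the pattern concrete, here is a minimal sketch of the same idea under a much-simplified schema. It uses Python's built-in sqlite3 module rather than hivemind's PostgreSQL code, so SQLite's `ANALYZE` stands in for `VACUUM ANALYZE`; the column layouts, the index name `tmp_votes_post_idx`, and the helper `make_demo_db` are all hypothetical. The function builds the temporary index, refreshes the planner's statistics, recomputes each post's rshares from its votes, and then drops the index.

```python
import sqlite3


def update_post_rshares(conn: sqlite3.Connection) -> None:
    """Recompute hive_posts.rshares as the sum of rshares in hive_votes.

    Hypothetical SQLite analogue of a bulk update backed by a temporary
    index; not hivemind's actual PostgreSQL implementation.
    """
    cur = conn.cursor()
    # Temporary index: it only exists for the duration of this bulk update.
    cur.execute(
        "CREATE INDEX IF NOT EXISTS tmp_votes_post_idx "
        "ON hive_votes(post_id, rshares)"
    )
    # Refresh planner statistics before the big query
    # (PostgreSQL would use VACUUM ANALYZE here).
    cur.execute("ANALYZE hive_votes")
    cur.execute(
        """
        UPDATE hive_posts
        SET rshares = (
            SELECT COALESCE(SUM(v.rshares), 0)
            FROM hive_votes AS v
            WHERE v.post_id = hive_posts.id
        )
        """
    )
    # Drop the index again once the bulk update is done.
    cur.execute("DROP INDEX tmp_votes_post_idx")
    conn.commit()


def make_demo_db() -> sqlite3.Connection:
    """Build a tiny in-memory database with hypothetical posts and votes."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE hive_posts (id INTEGER PRIMARY KEY, rshares INTEGER NOT NULL DEFAULT 0);
        CREATE TABLE hive_votes (post_id INTEGER NOT NULL, voter TEXT NOT NULL, rshares INTEGER NOT NULL);
        INSERT INTO hive_posts (id) VALUES (1), (2), (3);
        INSERT INTO hive_votes VALUES (1, 'alice', 100), (1, 'bob', 250), (2, 'carol', -40);
        """
    )
    return conn


if __name__ == "__main__":
    db = make_demo_db()
    update_post_rshares(db)
    print(db.execute("SELECT id, rshares FROM hive_posts ORDER BY id").fetchall())
```

Presumably the point of dropping the index afterwards is that the bulk update gets fast per-post lookups, while normal vote inserts don't keep paying to maintain an extra index.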

Optimized processing of effective_comment_vote operations during massive sync

When we know that a particular post will be paid out before the end of massive sync block processing, we can skip processing of effective_comment_vote operations for that post. This optimization reduced the number of post records that we need to flush to the database during massive sync by more than 50%. The merge request for this optimization is here: https://gitlab.syncad.com/hive/hivemind/-/merge_requests/525
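The filtering rule can be sketched in a few lines. This assumes the sync already knows, for each post, the block at which it will be paid out, and that a post's final payout data supersedes the intermediate values carried by its effective votes. `EffectiveVote`, `votes_to_process` and `posts_to_flush` are hypothetical names, not hivemind's code.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List, Set, Tuple

PostKey = Tuple[str, str]  # (author, permlink)


@dataclass(frozen=True)
class EffectiveVote:
    """Hypothetical, simplified view of an effective_comment_vote operation."""
    block_num: int
    author: str
    permlink: str
    pending_payout: float


def votes_to_process(
    votes: Iterable[EffectiveVote],
    payout_block: Dict[PostKey, int],
    sync_end_block: int,
) -> List[EffectiveVote]:
    """Drop votes on posts that are paid out before massive sync ends.

    Assumption: payout_block maps a post to the block where it is paid out,
    and the final payout supersedes any intermediate pending-payout values.
    """
    kept = []
    for vote in votes:
        paid_at = payout_block.get((vote.author, vote.permlink))
        if paid_at is not None and paid_at <= sync_end_block:
            continue  # paid out inside this massive-sync window: skip it
        kept.append(vote)
    return kept


def posts_to_flush(votes: Iterable[EffectiveVote]) -> Set[PostKey]:
    """Posts whose records still need to be written to the database."""
    return {(v.author, v.permlink) for v in votes}
```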

Merged in new hive sync option --max-retries

We also finished review and testing of the new --max-retries option, which configures how many times hive sync will retry (or whether it retries indefinitely) before shutting down if it loses contact with the hived node serving it blockchain data: https://gitlab.syncad.com/hive/hivemind/-/merge_requests/526
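The option amounts to a bounded (or unbounded) retry loop. The sketch below is hypothetical: the function name, the use of `ConnectionError` to signal a lost connection, and the convention that a negative value means "retry indefinitely" are assumptions, not the actual hive sync implementation.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_retries(
    fn: Callable[[], T],
    max_retries: int,
    delay_s: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, retrying on ConnectionError.

    Hypothetical convention: a negative max_retries means retry forever;
    otherwise re-raise after max_retries failed retries.
    """
    retries = 0
    while True:
        try:
            return fn()
        except ConnectionError:
            if 0 <= max_retries <= retries:
                raise  # retry budget exhausted: let the caller shut down
            retries += 1
            sleep(delay_s)
```

Injecting `sleep` keeps the loop easy to test without real delays.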

Optimized api.hive.blog servers (BlockTrades-supported API node)

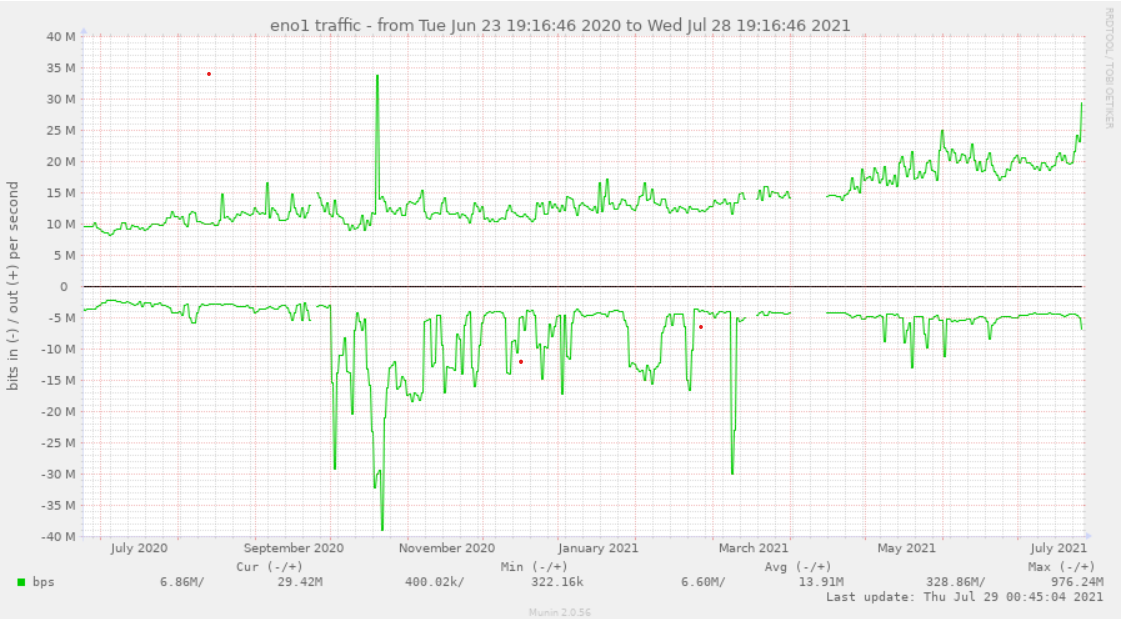

While there are a number of API nodes available nowadays, many Hive apps default to using our node (which is often useful, since it allows us to quickly spot any scaling issues that might arise as Hive API traffic increases over time). Here is a graph of how that traffic has increased over the last year:

As you can see, our incoming API traffic (the top part of the graph) has roughly tripled over the year.

Solved issue with transaction timeouts

Yesterday we started getting some reports of timeouts on transactions processed via api.hive.blog. Our immediate suspicion, of course, was that this was due to the increased traffic coming from Splinterlands servers, which turned out to be the case.

But we weren’t seeing much CPU or IO load on our servers despite the increased traffic (in fact, we had substantial headroom there: based on CPU bottlenecks alone, we could likely handle 12x or more the traffic we were receiving with just our existing servers), so this led us to suspect a network-related problem.

As a quick fix, we tried changing some of the network configuration parameters of our servers based on recommendations from @mahdiyari. He reported that this helped somewhat, but we were still getting reports of a fair number of timeouts, so we decided to do a more thorough analysis of the issue.

To properly analyze the network traffic, we first had to make some improvements to the jussi traffic analyzer that we use to analyze loading. Most of the increased traffic encoded the nature of each request in the POST body, which meant the details of those requests weren’t visible to the analyzer tool (e.g. we couldn’t see exactly which types of requests were creating the network problems). With these changes, the jussi analyzer can now distinguish what type of request is being made, allowing us to see which types of requests were slow and/or timing out.
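A minimal sketch of the classification step, assuming requests arrive as JSON-RPC 2.0 bodies (single objects or batches). Legacy requests use the method `call` with `[api, method, args]` as parameters, so the real target is only visible inside the body. The function names are hypothetical, and this is not the jussi analyzer's actual code.

```python
import json
from typing import Any, Dict, List


def _label(req: Dict[str, Any]) -> str:
    method = req.get("method", "")
    params = req.get("params")
    # Legacy (pre-appbase) style hides the real target inside params:
    # {"method": "call", "params": [api, method, args]}
    if method == "call" and isinstance(params, list) and len(params) >= 2:
        return f"{params[0]}.{params[1]}"
    # Appbase style names the target directly, e.g. "condenser_api.get_block".
    return str(method)


def request_types(body: bytes) -> List[str]:
    """Classify every JSON-RPC request contained in an HTTP POST body."""
    payload = json.loads(body)
    # A JSON-RPC batch is a list of request objects.
    requests = payload if isinstance(payload, list) else [payload]
    return [_label(r) for r in requests]
```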

After improving the analyzer, we found that the requests that were timing out were mostly transaction broadcasts using the old-style “pre-appbase” format. Requests of this type can’t be processed directly by a hived node, but we run a jussi gateway on api.hive.blog that converts these legacy-formatted requests into appbase-formatted requests (effectively making our node backwards-compatible with these old-style requests).
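The translation can be sketched as a pure function, assuming legacy requests map onto `condenser_api` methods that take the same positional arguments. This is a simplified, hypothetical version for illustration; jussi's own implementation handles more cases.

```python
from typing import Any, Dict


def translate_to_appbase(req: Dict[str, Any]) -> Dict[str, Any]:
    """Rewrite a legacy JSON-RPC request as an appbase condenser_api call.

    Simplified sketch: assumes condenser_api accepts the same positional
    arguments as the legacy method; appbase requests are returned as-is.
    """
    method = req.get("method", "")
    params = req.get("params", [])
    if "." in method:
        return req  # already appbase-style, e.g. "condenser_api.get_block"
    if method == "call":
        # {"method": "call", "params": [api, legacy_method, args]}
        if not (isinstance(params, list) and len(params) == 3):
            return req  # malformed legacy call: leave it for the backend to reject
        _api, legacy_method, args = params
    else:
        # Bare legacy method such as {"method": "get_block", "params": [1]}
        legacy_method, args = method, params
    return {
        "jsonrpc": req.get("jsonrpc", "2.0"),
        "id": req.get("id"),
        "method": f"condenser_api.{legacy_method}",
        "params": args,
    }
```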

So this was our first clue as to the real problem. After investigating how our jussi gateway was configured, we found that the converted requests were being sent to our hived nodes using the websocket protocol, unlike most other requests, which were sent via http. So, on a hunch, we modified the configuration of our jussi process to send translated requests as http requests instead of websocket requests, and this eliminated the timeouts (as confirmed by the jussi analyzer, beacon.peakd.com, and individual script testing by devs).

At this point, our node is operating very smoothly, despite signs that traffic has further increased beyond even yesterday’s traffic, so I’m confident we’ve solved all immediate issues.

Replacement of calls to broadcast_transaction_synchronous with broadcast_transaction

Despite handling traffic fine now, I’m also a bit concerned about the use of broadcast_transaction_synchronous calls. These are blocking calls that hold a connection open for 1.5s or more on average (half a block interval), because they wait for the transaction to be included in the blockchain before returning.
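A quick back-of-the-envelope way to see why blocking broadcasts tie up so many connections is Little's law (average concurrency = arrival rate × time each request is held). Only the 3-second block interval comes from Hive itself; the request rate and the non-blocking round-trip time below are made-up figures for illustration.

```python
HIVE_BLOCK_INTERVAL_S = 3.0  # Hive produces a block every 3 seconds


def open_connections(tx_per_second: float, hold_time_s: float) -> float:
    """Little's law: average concurrency = arrival rate x time each request is held."""
    return tx_per_second * hold_time_s


# Synchronous broadcast: held until the transaction lands in a block,
# about half a block interval on average.
SYNC_HOLD_S = HIVE_BLOCK_INTERVAL_S / 2

# Hypothetical figures, for illustration only.
ASYNC_HOLD_S = 0.05    # assumed round trip for a non-blocking broadcast
LOAD_TX_PER_S = 200.0  # assumed burst of broadcasts per second

if __name__ == "__main__":
    print("sync :", open_connections(LOAD_TX_PER_S, SYNC_HOLD_S))
    print("async:", open_connections(LOAD_TX_PER_S, ASYNC_HOLD_S))
```

With those assumed numbers, 200 broadcasts per second held for 1.5s each keep about 300 connections open, versus about 10 if each request returns in 50ms.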

I’ve asked the library devs to look into changing their libraries to rely on the newer broadcast_transaction call (a non-blocking call) and to use the transaction_status API to determine when their transaction has been included in a block. This should eliminate the large number of open connections that can occur when many transactions are being broadcast at once.
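Below is a minimal sketch of the broadcast-then-poll pattern, assuming a generic JSON-RPC helper `call(method, params)` that returns the `result` field. The method names `condenser_api.broadcast_transaction` and `transaction_status_api.find_transaction`, and the status strings, reflect our understanding of hived's APIs and should be checked against the official documentation. The function itself is hypothetical, and the transaction id is assumed to come from whatever library signed the transaction.

```python
import time
from typing import Any, Callable, Dict

# (method, params) -> JSON-RPC "result"; the transport is left abstract.
RpcCall = Callable[[str, Any], Dict[str, Any]]

# Status strings as we understand hived's transaction_status_api; verify
# against the API documentation before relying on them.
INCLUDED = {"within_reversible_block", "within_irreversible_block"}
FAILED = {"expired_reversible", "expired_irreversible", "too_old"}


def broadcast_and_wait(
    call: RpcCall,
    signed_trx: Dict[str, Any],
    trx_id: str,
    poll_interval_s: float = 0.5,
    timeout_s: float = 60.0,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> str:
    """Broadcast without blocking, then poll until the transaction is in a block."""
    # Returns once the node accepts the transaction, so the connection is
    # not held open while waiting for the next block.
    call("condenser_api.broadcast_transaction", [signed_trx])
    deadline = clock() + timeout_s
    while True:
        result = call("transaction_status_api.find_transaction", {"transaction_id": trx_id})
        status = result["status"]
        if status in INCLUDED:
            return status
        if status in FAILED:
            raise RuntimeError(f"transaction {trx_id} was not included: {status}")
        if clock() >= deadline:
            raise TimeoutError(f"transaction {trx_id} still {status} after {timeout_s}s")
        sleep(poll_interval_s)
```

Each status check is a short request, so no single connection stays open for a whole block interval.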

@mahdiyari has already made changes to hive-js (the Javascript library for Hive apps) along these lines (as well as replacing the use of legacy-formatted API calls with appbase-formatted API calls), and app developers are now beginning to test their apps with this beta version of hive-js. Assuming this process goes smoothly, I anticipate that other library devs will swiftly follow suit.

Hive Application Framework (HAF)

Some enhancements to HAF have been made to support synchronization of multiple Hive apps operating on a HAF server. This allows an app that relies on the data of other apps to be sure that those apps have processed all blocks up to the point where the dependent app is currently working. This enhancement also involved support for secure sharing of data between HAF apps on a HAF server (for example, a dependent app can read, but not write, data in the tables of the app it depends on).
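The synchronization rule described above boils down to this: a dependent app may only advance to the lowest block that every app it depends on has already processed. The sketch below illustrates just that rule. The function name, the `processed` map, and the app names in the test are hypothetical; it does not model HAF's actual API or its read-only data sharing.

```python
from typing import Dict, Iterable, Optional, Tuple


def next_block_range(
    app_last_block: int,
    head_block: int,
    dependencies: Iterable[str],
    processed: Dict[str, int],
    batch_size: int = 1000,
) -> Optional[Tuple[int, int]]:
    """Blocks a dependent app may process now without outrunning its dependencies.

    processed maps each app name to the last block that app has finished.
    Returns an inclusive (first, last) range, or None if the app must wait.
    """
    # The app can't go past the chain head or past any app it reads from.
    limit = min([head_block] + [processed[name] for name in dependencies])
    first = app_last_block + 1
    if first > limit:
        return None  # a dependency is behind: wait for it to catch up
    return first, min(limit, first + batch_size - 1)
```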

As I understand it, we now have a sample application built using HAF, but I haven’t had a chance to review it yet, as I was busy yesterday analyzing and optimizing our web infrastructure with our infrastructure team as discussed above. But this is high on my personal priority list, so I plan to review the work done here soon.

What’s next?

We’ve resumed work on the sql_serializer plugin, which is one of the last key pieces needed before we can release HAF. Once those changes are completed, we’ll be able to do an end-to-end test with hived→sql_serializer→hivemind with a full sync of the blockchain. Perhaps I’m optimistic about the resulting speedup, but it’s possible we could have results as early as next Monday. I’m hoping we have some form of HAF ready for early beta testers within a week or two, but bear in mind that is a best case scenario.

Glad all the activity is helping to find the issues that will be helpful for the future

I'm using PeakD right now with the updates, and many transactions are many, many times faster now.

The replacement of broadcast_transaction_synchronous calls by broadcast_transaction calls will pay big dividends, both in terms of scalability to handle more traffic, and as you've noticed, in terms of app responsiveness and improved user experience. I really hope all Hive libraries quickly migrate to the new paradigm. I think in the long run we should remove support for broadcast_transaction_synchronous calls from hived.

Hello. I do not know if it is related to the increase in traffic created by Splinterlands, but since yesterday I have been receiving the following error very frequently:

We think it's related to a new version of condenser using a new version of hive-js (new version is better/faster in general, but we're seeing this new strange behavior, so we're looking into it).

Yes, I have been aware of the updates and without a doubt there is a big difference in the speed of loading of hive.blog. For me it has been a difference from earth to heaven, since my connection is basically through 4G. But this 502 error has been bugging me a bit.

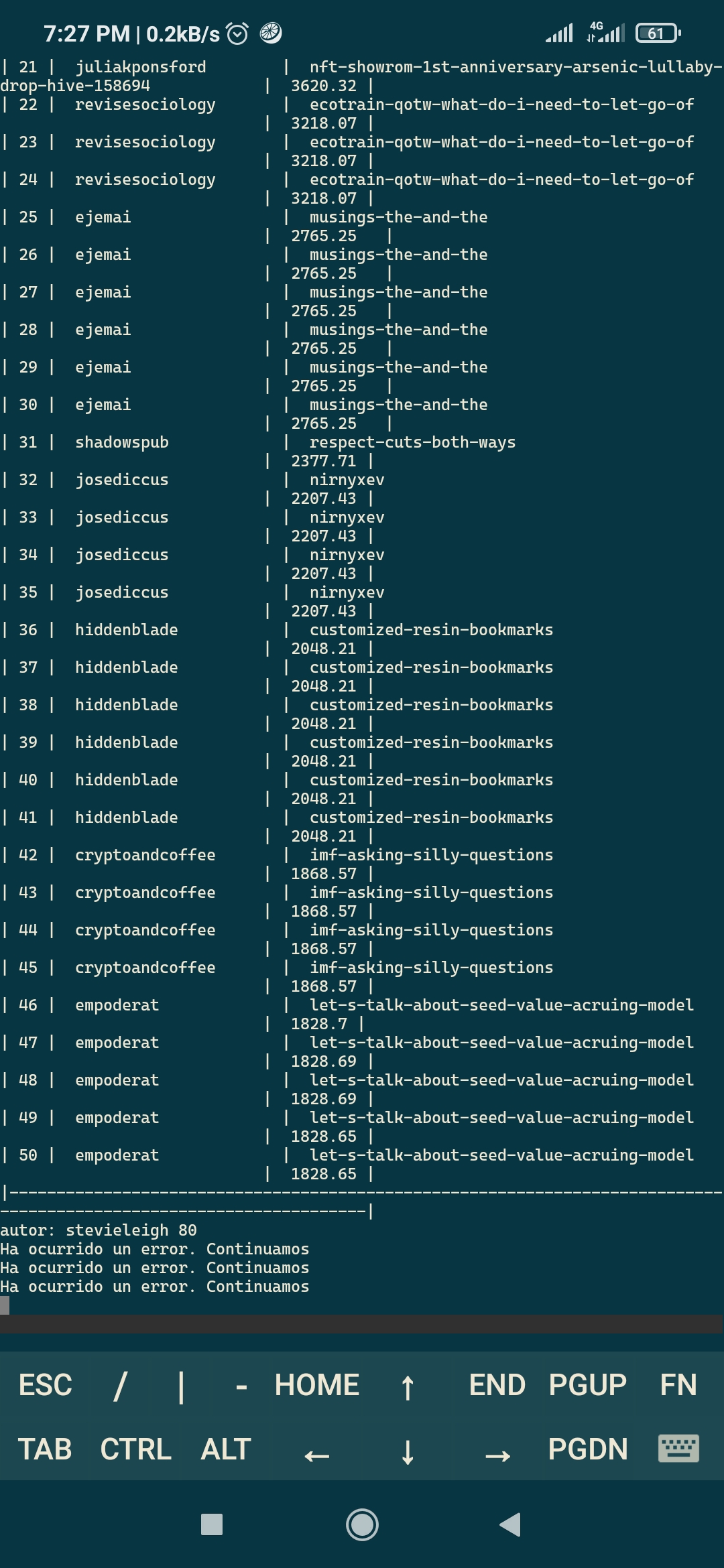

The other detail that I have observed is that posts and comments are being repeated up to 6 times in some cases. I received your reply to my previous comment 5 times. I do not know if it is a problem with the browser (Brave), which is the one I use most frequently. However, as my internet connection is limited and expensive, I have a small bot on a VPS that makes a ranking of the best published posts so that I don't have to consume data to find them, and the problem is that all the posts appear repeated many times. This is a screenshot of the script's output. It is not a program error; the API simply repeats the post multiple times. I guess it is part of the same issue that was observed in the hive.blog comments.

Yup. I notice it loads and loads, and then the comment appears once for every time you hit refresh or retry.

I actually replied three times accidentally because of an issue where transactions sometimes look like they've failed when they haven't right now. I erased the extra comments.

We think it's related to a new version of condenser using a new version of hive-js (new version is better/faster in general, but we're seeing this new strange behavior, so we're looking into it). We will likely temporarily roll back the change.

The developers of Batman Arkham Knight and BeamNG Drive want to know your location. 😂😂😂

I am one HIVE Dolphin who greatly appreciates all of the work you folks do! Thanks a whole bunch!

Really appreciate you and @mahdiyari putting in the effort on api.hive.blog and transitioning to the right broadcast api! Impressive result with the change to Jussi. Recent stats posts show a massive uptick in usage and I don't think we can even call this a scaling hiccup 😎 Will be nice to have the libs ready for the future and to help lighten the current networking reqs

Can't wait for HAF! Everything else is noise 😆

Super exciting.

Looking forward to HAF.

Sounds like you and your team are setting us up to have the ability to scale into the future.

Thank you for all of your efforts!

Congratulations @blocktrades! You have completed the following achievement on the Hive blockchain and have been rewarded with new badge(s):

Your next payout target is 1250000 HP.

The unit is Hive Power equivalent because your rewards can be split into HP and HBD

You can view your badges on your board and compare yourself to others in the Ranking

If you no longer want to receive notifications, reply to this comment with the word

STOP

To support your work, I also upvoted your post!

Check out the last post from @hivebuzz:

Well done great work keep it up 👍❤️👍

As a CFA holder, I'd say the graph was properly straight to the point. If it takes effect by next week, as I too am expecting it to work, please keep me updated.

CFA = chartered financial analyst?

If so, I thought you guys only show your clients graphs that go upwards :-) But we can hope that our traffic graph stays in line with your expectations (unless some of the traffic starts getting diverted to other Hive API nodes).

Good morning @blocktrades

Hey, I have two transfers that have issues. What is the best way to contact you? One isn’t shown as pending or anything; it seemed to be loading for a long time, but it was sent. The other says completed, but there is no pending transaction in my wallet. Thank you so much! Anyone else having issues this AM?

Deleted - contacted direct

Congratulations @blocktrades! Your post has been a top performer on the Hive blockchain and you have been rewarded with the following badge:

You can view your badges on your board and compare yourself to others in the Ranking

If you no longer want to receive notifications, reply to this comment with the word

STOP

Check out the last post from @hivebuzz:

Sorry I have not been so active lately, but what is the time horizon for smart contracts?

There are currently two 2nd-layer platforms for smart contracts: Hive Engine and DLUX.

In addition, BlockTrades will be developing a HAF-based smart contract engine, but we don't have a firm timeline yet as we haven't scoped the task yet (we'll start that work after we release HAF itself). But I have my fingers crossed that we can have something before the end of the year. HAF itself should solve most of the challenging issues associated with the development of the smart contract system (easy to program, highly scalable, robust, and a strong security system against rogue contract actions).

Congratulations, @blocktrades Your Post Got 100% Boost By @hiveupme Curator.

@theguruasia Burnt 6.286 VAULT To Share This (20% Fixed Profit) Upvote.

"Delegate @hiveupme Curation Project To Earn 95% Delegation Rewards & 70% Burnt Token Rewards In SWAP.HIVE"

Contact Us : CORE / VAULT Token Discord Channel

Learn Burn-To-Vote : Here You Can Find Steps To Burn-To-Vote

I'm glad to see progress on HAF. I'm looking forward to testing the beta version.

Great update, as the other day I experienced an issue where a transaction on a Hive node seemed successful but was not successful on the app side. For example, when MuTerra launched its pack sale, some of the members who signed up weren't able to buy the desired pack and ended up with a refund after the sale ended. It was a bit disappointing because we lost the chance to buy at that specific price while thinking we had secured it, and then the next day it was refunded because of the node issue, which was out of their hands.

This shows that the developers and core team are working hard day and night to make sure Hive is favourable to the community. When Hive is favourable, it will surely benefit everyone here, both the community and the core teams, because it will attract more communities. People out there are watching and observing, waiting for the best time to invest, and also watching how secure the network is and how dedicated the core teams and developers are. Thanks and kudos to this team: they are fantastic. Keep it up!

:)

Congratulations @blocktrades! Your post has been a top performer on the Hive blockchain and you have been rewarded with the following badge:

You can view your badges on your board and compare yourself to others in the Ranking

If you no longer want to receive notifications, reply to this comment with the word

STOP

I am not using the updates yet, but we users welcome all improvements made for the welfare of the platform. To update PeakD, the message the team gives me says I must first update the Google Chrome browser, but I have doubts about it, so I will consult with a friend before updating to see how it goes.

Well done 👍👏

Keep it up ♥️